29th Annual RESNA Conference Proceedings

Dual User Interface Design As Key To Adoption For Computationally Complex Assistive Technology

Stefan Carmien, Anja Kintsch

University of Colorado, Center for LifeLong Learning and Design, Boulder, CO USA

ABSTRACT

Highly complex assistive technologies hold much promise for supporting independence for persons with communicative and cognitive disabilities. However, the very complexity that gives these devices their power is perhaps the reason for their very high abandonment rate. Part of the solution is designing with dual user interfaces: one for the person with disabilities, and one for the caregivers who configure the devices. This paper describes the design and testing of such a system, MAPS (Memory Aiding Prompting System). This unique design challenge and its broader implications are presented.

KEYWORDS:

Assistive Technology, Usability, Design, ADL support

INTRODUCTION

Designing artifacts that enable people with disabilities to complete activities of daily living is the domain of rehabilitation engineers and assistive technology professionals. Yet, however exquisitely designed and executed a piece of assistive technology (AT) is, if it is not adopted and used by the population it is designed for, it is a failure. Many ATs require a design approach that treats these complex devices as having two equally critical interfaces: one for the user with a disability, and another for the teacher, parent, or other caregiver who personalizes or customizes the AT for successful use. It is the problems of adoption and abandonment that the dual user interface approach is intended to ameliorate.

Assistive technology spans a range from aids that enable access to buildings to handheld prompting devices for those whose executive and memory functions are limited. As an AT artifact attempts to aid with more complex compensatory acts, the configuration of the device (and its subsequent re-configuration as needs and abilities change) becomes more and more critical for final adoption by the end user [1].

ABANDONMENT CAUSES AND USER EXPERIENCE

While AT devices can have a profound positive impact on the daily life of persons with disabilities, many initially adopted devices and systems are unfortunately abandoned. An estimated 13 million AT devices are used in North America alone [2]. Studies have reported abandonment rates that range from 8% for life-saving devices to 78% for hearing aids [1]. Studies of the causes of abandonment have noted that a "change in the needs of the user" is a strong predictor of abandonment [3,4]; such changes might be accommodated by technology that is easier to re-configure to the new needs of the user or situation.

AT tools can be divided into two categories: (1) those that work out of the box or need initial configuration with only minor adjustment over time (e.g., wheelchairs or adapted mice and keyboards for the computer), and (2) those that need initial configuration and subsequent re-configuration (e.g., AAC devices or computationally based prompters). Adjusting devices of the second type to the changing needs of the user, as well as to changes in the task and environment, is challenging for the typical caregiver. Examples of these complex tools include the Dynavox and Mercury communication systems, the Visions prompting system, and the AbleLink pocket coach. This paper addresses the design problems of the second type of AT.

ANALYZING TOOLS IN AN EXISTING AT NICHE, A SCENARIO

John and Maria have a young son, Mark, with Down syndrome. While overall a happy boy, he struggles with most of the tasks he faces, particularly communication. On the advice of an AT specialist, John purchased a small handheld communication device with a dynamic display, giving Mark the potential to express a nearly limitless number of messages.

Once the device arrived, John realized he was faced with a monumental challenge: he needed to spend many evening hours learning just how to program the device. John asked Mark's teachers for messages Mark might want to communicate. The teachers were happy to oblige, but had never worked with an AAC device. They sent John the types of things Mark was working on, such as word and letter recognition, color matching, and tracing. The device allowed 20 messages to be displayed on each page, and buttons could also be programmed to lead to additional pages. John programmed some 40 different pages. While Mark quickly learned where most of the buttons were, he did not use the device. John continued to add content, hoping that new messages might spark Mark's interest, but this did not help Mark use it.

John, Maria, and the school team then called on an AT specialist to look at the problem. She recognized several difficulties with the way in which the device was programmed. Because John had put the same ten link buttons on each page, related topics, such as animals, had to be spread across two or three different pages, which was confusing for Mark. Moreover, Mark needed to have the most important messages, those he needed to say most often, readily available on the home page. In the end John was forced to scrap all the work he had done and start over. Because the device is now much more user friendly, Mark has begun using it more readily both at home and at school. Now he chooses to use it.

Learning to program the device should not have taken 10-15 hours of study. Many parents do not have the patience or time to devote to such a task. Reprogramming the device should have been made easier: John should have been able to rearrange the work he had already completed.

DESIGN PRINCIPLES: SYMMETRY OF IGNORANCE AND THE METADESIGN PROBLEM

Computationally based assistive technology devices involve not only the person with a disability, but also her caregivers, her community, and the ever-changing environment. To guide the design of the second (or caregiver) interface for such devices, it is useful to envision the caregiver and the person with disabilities as reflections of each other. For example, a caregiver who configures a handheld prompter system for a person with cognitive disabilities supplies the cognitive capacity needed to envision and create the task prompts. Similarly, the caregiver who re-configures an AAC device must have a high-level comprehension of the communicative acts that the new set of overlays will facilitate, what the user might wish to say, and how communicative messages can best be laid out for ease of access for that particular user. In short, the second interface (the caregiver's) has to be concerned with providing the content and structure that complement the disability.

The basis of this dual-interface concept is a version of the concept of symmetry of ignorance [5], a way of describing situations where several participants in an endeavor each hold part of the knowledge needed to accomplish a task, but none has enough to accomplish it independently. An end user may know exactly what an application needs to do but be unable to program, while a programmer may know how to develop robust applications but, working in isolation, creates unusable software.

The configuration and reconfiguration of AAC and prompter systems require the caregiver to become a programmer of the device; the design task for the AT developer is therefore to design tools that facilitate programming by the non-programmer. This programming can span from configuring and layering message screens to creating multimedia prompts. This is an example of a metadesign problem [6]. Designers of metadesign tools need sufficient domain expertise to accommodate likely situations, yet must create a tool that is 'under-built' enough to support the personalization that the end users and their tasks require.

MAPS

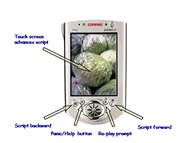

MAPS (Memory Aiding Prompting System) [7] is a handheld prompting system (see Figures 1 and 2) that prompts a person with cognitive disabilities through tasks that were previously difficult or impossible for them to complete independently due to difficulties with memory or executive functions. MAPS consists of two parts: a script editing tool, designed to allow a caregiver with minimal computer skills to create, store, and deliver scripts representing tasks, and a handheld prompter used by the person with cognitive disabilities.

The MAPS handheld prompter 'plays' the visual and verbal cues that guide the successful completion of a chosen task (Figure 1). The images that appear on the small screen are personalized for the user, usually photographs of the task steps, each accompanied by a verbal prompt describing the action to be taken. The controls on the handheld computer have been simplified to a minimal set. The caregiver can modify the placement and function of these controls to fit the user.
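The playback behavior described above can be illustrated with a minimal sketch. It is not the MAPS implementation: the class name `Prompter`, the action names ("next", "back", "repeat"), the button names, and the file names are all assumptions made for illustration. The sketch shows a prompter stepping through image and audio cue pairs, with a caregiver-supplied mapping from physical buttons to actions.

```python
from typing import Dict, List, Tuple

# One cue: (image file, audio file). File names are hypothetical.
Prompt = Tuple[str, str]


class Prompter:
    """Illustrative handheld prompter: steps through cues with a minimal control set."""

    def __init__(self, prompts: List[Prompt], controls: Dict[str, str]):
        if not prompts:
            raise ValueError("a script needs at least one prompt")
        self.prompts = prompts
        # Caregiver-configurable mapping: button name -> action.
        self.controls = controls
        self.position = 0

    def current(self) -> Prompt:
        """Return the cue that should be shown and spoken now."""
        return self.prompts[self.position]

    def press(self, button: str) -> Prompt:
        """Apply the action mapped to a button and return the resulting cue."""
        action = self.controls.get(button)
        if action == "next":
            self.position = min(self.position + 1, len(self.prompts) - 1)
        elif action == "back":
            self.position = max(self.position - 1, 0)
        # "repeat" and unmapped buttons leave the position unchanged,
        # so the current cue is simply presented again.
        return self.current()
```

In this sketch a caregiver could fit the device to the user by passing a different `controls` dictionary, for example mapping only one large button to "next" for a user who is confused by multiple buttons.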

The MAPS caregiver interface provides the tools and support for creating, annotating, modifying, and storing scripts to be used in the MAPS handheld prompter (Figure 2). The process of preparing the MAPS system for use by a person with cognitive disabilities consists of selecting an appropriate task to be prompted, segmenting the task into appropriately sized cognitive chunks, collecting and preparing the images and verbal prompts to cue the segments of the task, and finally using the script editor to assemble, store, and load the finished script onto the handheld prompter.
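One way to picture a script is as an ordered list of steps, each pairing an image with a verbal prompt. The sketch below is a hypothetical data model, not the actual MAPS data format: the names `PromptStep`, `Script`, `add_step`, `move_step`, and the example task and file names are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptStep:
    """One segment of a task (hypothetical model)."""
    image_file: str    # photograph of the task step
    audio_file: str    # recorded verbal prompt
    caption: str = ""  # optional caregiver annotation


@dataclass
class Script:
    """An ordered sequence of prompt steps representing one task."""
    task_name: str
    steps: List[PromptStep] = field(default_factory=list)

    def add_step(self, image_file: str, audio_file: str, caption: str = "") -> "Script":
        """Append a step; returns self so calls can be chained."""
        self.steps.append(PromptStep(image_file, audio_file, caption))
        return self

    def move_step(self, old_index: int, new_index: int) -> None:
        """Reorder an existing step without re-creating it."""
        step = self.steps.pop(old_index)
        self.steps.insert(new_index, step)


def build_example_script() -> Script:
    """Assemble a small example script (task and file names are invented)."""
    script = Script("Make a sandwich")
    script.add_step("bread.jpg", "bread.wav", "Get two slices of bread")
    script.add_step("spread.jpg", "spread.wav", "Spread the peanut butter")
    script.add_step("close.jpg", "close.wav", "Put the slices together")
    return script
```

Keeping each step as a self-contained unit means that a step can be moved or replaced without rebuilding the rest of the script, which is the kind of rearrangement that was missing in the scenario above.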

The design process for the script editor emphasized re-using existing computer skills, basing the application's cognitive model on a familiar metaphor (filmstrip and MS PowerPoint), and performing several iterations of user testing. Caregivers, selected for low computer skills, participated in user testing and were probed for their understanding of the application's conceptual model (which may differ from the actual data/program model). We ran three iterations of user testing, each with between three and eight users. Each round resulted in non-trivial design changes. The overarching goal was to make initial script generation easy and successful while supporting complex annotated scripts as the user became more skilled.

CONCLUSIONS

Thinking about high-functioning AT from the perspective of dual user interfaces from the beginning of the design process can give designs a better chance of continued use. In the MAPS example, while the system is still a research vehicle, the feedback we have received from assistive technologists and caregivers, particularly in contrast with other systems that they have encountered, is markedly positive.

Contact:

Stefan Carmien

430 UCB, ECOT 717

University of Colorado

Boulder, CO USA

carmien@l3d.cs.colorado.edu

303-735-0223

REFERENCES

1. Scherer MJ. Living in the State of Stuck: How Technology Impacts the Lives of People with Disabilities. Cambridge: Brookline Books; 1996.

2. LaPlante ME, Hendershot GE, Moss AJ. The prevalence of need for assistive technology devices and home accessibility features. Technology and Disability 1997;6:17-28.

3. Phillips B, Zhao H. Predictors of Assistive Technology Abandonment. Assistive Technology 1993;5(1).

4. Reimer-Reiss M. Assistive Technology Discontinuance. Technology and Persons with Disabilities Conference; 2000.

5. Fischer G, Ehn P, Engeström Y, Virkkunen J. Symmetry of Ignorance and Informed Participation. In: Binder T, Gregory J, Wagner I, editors. Proceedings of the Participatory Design Conference (PDC'02); 2002; Malmö University, Sweden. CPSR. p 426-428.

6. Fischer G, Giaccardi E, Ye Y, Sutcliffe AG, Mehandjiev N. Meta-Design: A Manifesto for End-User Development. Communications of the ACM 2004;47(9):33-37.

7. Carmien S. 2005. MAPS Website. <www.cs.colorado.edu/~l3d/clever/projects/maps.html>
