Engaging Children In Social Behavior: Interaction With A Robot Playmate Through Tablet-Based Apps

Hae Won Park, Ayanna M Howard

School of Electrical and Computer Engineering, Georgia Institute of Technology, Atlanta, GA

ABSTRACT

Touchscreen-based smart devices, such as the iPad, are increasingly used to assist in education and communication interventions for children with Autism Spectrum Disorder (ASD). There is also growing evidence that robots can foster social interaction in children with ASD. Unfortunately, although tablet-based interventions have been successfully implemented in the home environment, robotic platforms have not. One reason is that these robotic platforms are typically not autonomous, i.e., they are controlled directly by the clinician or through pre-scripted behavior. This makes it difficult to deploy such platforms outside of the clinical setting. To capitalize on the widespread ease of use of tablet devices and the emerging success found in the field of social robotics, we present efforts that focus on designing an autonomous interactive robot that socially interacts with a child using the tablet as a shared medium. The purpose is to foster social interaction through play that is directed by the child, thus moving toward behavior that can be translated outside of the clinical setting.

BACKGROUND

Socially assistive robotics, defined as robots that provide assistance to human users primarily through social interaction (Feil-Seifer, 2005), continues to grow as a viable method for robot-assisted therapy. Through the use of social cues, socially assistive robots can enable long-term relationships between the robot and the child that drastically increase the child’s motivation to complete a task (Kidd, 2008). Recently, there has been growing interest in research involving therapy through play between robots and children with pervasive developmental disorders, such as Autism Spectrum Disorder (ASD) (Howard, 2013). While typically developing children possess the ability to imitate others from birth, children with ASD demonstrate significant difficulty in object and motor imitation. Studies involving therapeutic play between robots and children with ASD have thus been of particular interest for several reasons. First, clinical evidence shows that children with ASD are capable of learning and of altering their behaviors when teaching is provided using clear instructions, repetition and practice, and immediate reinforcement of correct responses. Robots in their basic incarnation are well suited to provide consistent actions in a repetitive fashion (Scassellati, 2012). Second, it has been shown that children with disabilities naturally find robots engaging and respond favorably to social interactions with them, even when the child typically does not respond socially to humans (Robins, 2009). The difficulty in this domain still lies in providing long-term, continuous interactive behavior between the child and the robot.

Tablets have also been shown to provide an engaging experience for children with disabilities across a range of learning and therapy opportunities (López, 2012; Shah, 2011). In this domain, a few efforts have focused on integrating robots and tablets to engage children in the interaction. Popchilla (Popchilla, 2013) is a robotic platform, controllable through the iPad, that engages children with ASD through movements and facial expressions. Baxter (2012) focused on teaching children, through interaction with a robot, how to characterize a set of objects on a touchscreen. Finally, Park (2013) presented a method for using the tablet as a shared medium for human-robot interaction studies. Unfortunately, in most of these cases, the robot still functions as a tele-operated device, i.e., it is remote-controlled through the tablet.

In other work, studies have shown that when children are required to teach others, they themselves become more engaged in the task (Gartner, 1971). As such, to further research in the use of robotics for therapy applications, especially as applied to children with ASD, we focus on devising a method of interaction through learning. The role of robot learning for child-based engagement in therapy is to increase the duration of the child’s interaction by incorporating the concept of turn-taking. In this work, we utilize an approach in which a social robot observes a child’s interaction during game play, generates an appropriate behavior, and then engages with its child partner as a learner. This learning response is accomplished by utilizing a mimicking process in which the child and robot take turns in accomplishing a goal, thereby motivating and stimulating the social behavior of the participant. In this paper, we provide an overview of the system and assess the ability of our socially interactive learning robot to engage children in social behavior.

PURPOSE

The purpose of the study discussed in this paper is to answer the following research questions:

- Is there a difference in the emergence of social behavior initiated by a child when conducting a task with a person or with a robot?

- Does the length of social behaviors differ between a comparison group of typically developing children and children with ASD?

METHOD

Subjects

We recruited 19 school-age children (mean age m=12.21, standard deviation σ=4.16) including 7 girls (m=12.71, σ=3.86) and 12 boys (m=11.08, σ=4.56) to teach our social robot how to play a tablet-based gaming App. Sessions were conducted at various times during a two-month period.

Experimental Setup

Gaming App:

Because most children are familiar with it, we utilized a version of Angry Birds, a popular gaming App on both Android and iOS platforms, for the study (Figure 1). In this version of Angry Birds, four levels of varying difficulty were implemented to enable interaction with the social robot. The game’s objective is to shoot a bird to destroy all enemies, either by aiming at them directly or by knocking down the surrounding structures so that they collapse onto them. Social behaviors of the participants were measured as the length of time during which eye contact was made or vocal or gestural interaction behaviors were observed.

Social Robot:

We utilized the DARwIn-OP platform (Darwin) (Ha, 2011) as our socially assistive robotic agent (Figure 2). Darwin is 45 cm (18 in) in height, weighs 2.9 kg (6.4 lb), and has 3 degree-of-freedom (DoF) arms, 6-DoF legs, and a 2-DoF head with LEDs embedded in its eyes. To enable interaction with the child, Darwin is programmed with a range of verbal and gestural behaviors that are coupled with emotion indicators using the LEDs in its eyes. The behaviors are grouped into positive, negative, neutral, and idle states, and the combinations of verbal and gestural behaviors are randomly generated within each behavior group. These groupings are based on prior studies that examined the effect of different behaviors on engagement (Brown, 2013). The robot is also given a passive personality, meaning that Darwin retracts its motions and waits for its turn whenever its human teacher reaches out to provide demonstrations or interact with the tablet.
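The behavior selection described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation used in the study: the phrase and gesture names, the `select_behavior` function, and the `teacher_reaching` flag are all hypothetical. Only the four behavior groups, the random pairing within a group, and the yield-to-teacher rule come from the description.

```python
import random
from dataclasses import dataclass

# Hypothetical behavior catalogue: placeholder names for illustration only,
# not the verbal or gestural behaviors used in the study.
VERBAL = {
    "positive": ["cheer", "thank_you"],
    "negative": ["sigh", "oh_no"],
    "neutral": ["hmm", "okay"],
    "idle": ["hum"],
}
GESTURAL = {
    "positive": ["clap", "nod"],
    "negative": ["head_shake", "slump"],
    "neutral": ["tilt_head", "look_at_tablet"],
    "idle": ["look_around"],
}


@dataclass(frozen=True)
class Behavior:
    group: str
    verbal: str
    gesture: str


def select_behavior(group, teacher_reaching, rng):
    """Pick a random verbal/gestural pair from `group`.

    Returns None when the teacher is reaching for the tablet, modeling the
    passive personality: the robot retracts and waits for its turn.
    """
    if teacher_reaching:
        return None
    return Behavior(group, rng.choice(VERBAL[group]), rng.choice(GESTURAL[group]))


if __name__ == "__main__":
    rng = random.Random(0)  # seeded only to make the demo repeatable
    print(select_behavior("positive", teacher_reaching=False, rng=rng))
    print(select_behavior("positive", teacher_reaching=True, rng=rng))  # None
```

Passing the random generator in explicitly keeps the selection repeatable in tests while still allowing unseeded, varied behavior during a session.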

Procedures

Participants were asked to take part in two sessions involving interaction with the gaming App. Touch-based gestures on the tablet were logged, and two video cameras were placed to record the sessions. The log and videos were later used for system evaluation. In the first session, Session I, participants were asked to interact with the game, without the robot learner, while the experimenter was present. The goal of Session I was to collect baseline data for evaluating differences when interacting with a person versus with a robot. In Session II, the participants were asked to interact with the robot by teaching the robot learner how to play the game. In each session, the participants were interacting with their partner (the experimenter in Session I, the robot in Session II) for the first time. The structure of the Angry Darwin game makes various strategies possible for completing each level within a given number of attempts. The instructions given by the experimenter to the participants were strictly scripted to avoid influencing the participants’ experience. The script was as follows:

Now, I’d like you to teach Darwin to play the same game. Just teach him in the same manner you would teach your younger sibling. Provide Darwin with demonstrations on how to solve each level. Whenever you reach out to provide a demonstration to Darwin, he will wait for his turn. Continue teaching each level until you are satisfied that Darwin has learned the level well enough, or you think Darwin has stopped learning. Later, I want you to show me what you have taught Darwin, and collaboratively solve each level with him. Darwin may or may not try to communicate with you, and he may not use human language. Afterwards, I will ask you some questions about your experience teaching a task to Darwin.

To address the first objective of determining whether there is a difference in the emergence of social behavior initiated by an individual when conducting a task with a person or with a robot, social behaviors initiated by the participants were measured as the length of time during which eye contact was made or vocal or gestural interaction behaviors were observed. To address the second objective of whether the length of social behaviors differs between typically developing children and children with ASD, we also conducted a pilot study with two children diagnosed with Autism Spectrum Disorder.
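Because eye contact, vocal, and gestural cues can occur at the same time, a duration-based measure has to decide how overlapping cues are counted. The paper does not specify this step, so the following is only a sketch under an assumption: overall social-behavior time is taken as the union of all annotated intervals, while each category is reported against the total session length. The function names and the annotation format (lists of `(start, end)` times in seconds) are hypothetical.

```python
def merge_intervals(intervals):
    """Merge overlapping (start, end) intervals given in seconds."""
    merged = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1]:
            # Overlaps (or touches) the previous interval: extend it.
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


def duration(intervals):
    """Total time covered by the intervals, counting overlaps once."""
    return sum(end - start for start, end in merge_intervals(intervals))


def social_behavior_summary(annotations, session_length):
    """Per-category and overall fractions of the session spent in social behavior."""
    per_category = {
        name: duration(spans) / session_length
        for name, spans in annotations.items()
    }
    all_spans = [span for spans in annotations.values() for span in spans]
    return {
        "per_category": per_category,
        "overall": duration(all_spans) / session_length,
    }


if __name__ == "__main__":
    # Made-up annotations for a 600-second session (illustration only).
    annotations = {
        "eye_contact": [(0, 60), (100, 160)],
        "vocal": [(30, 90)],
        "gestural": [(150, 170)],
    }
    print(social_behavior_summary(annotations, session_length=600.0))
```

Under this assumption the per-category fractions can sum to more than the overall fraction, since simultaneous cues are counted once in the overall figure but once per category in the breakdown.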

RESULTS

| | Session I: Female | Session I: Male | Session I: Combined | Session II: Female | Session II: Male | Session II: Combined |
|---|---|---|---|---|---|---|
| Avg. total time of interaction (min) | 9.58 | 8.35 | 8.80 | 29.75 | 24.56 | 26.47 |
| Avg. time of initiated social behaviors (min) | 0.66 | 0.51 | 0.56 | 13.60 | 8.31 | 10.26 |
| Percentage of social behavior | 6.84% | 6.13% | 6.42% | 45.71% | 33.83% | 38.75% |

| Social-behavior Category | Female | Male | Combined |
|---|---|---|---|
| Eye contact / Gaze | 38.49% | 30.91% | 34.05% |
| Gestural interaction | 14.85% | 17.17% | 16.21% |
| Vocal interaction | 29.21% | 20.87% | 24.32% |

First, the various forms of interaction that participants used while teaching the robot were coded. These natural forms of interaction, e.g., the length of time during which eye contact was made, were observed and then categorized into instructive and non-instructive interactions, as depicted in Table 1. On average, participants spent 8.80 minutes with the experimenter and 26.47 minutes with the robot playing the game (Figure 3). The more significant measurement is the proportion of each session during which participants initiated social interaction. Compared to a 6.42% social-behavior occurrence in Session I, participants dedicated 38.75% of their time to initiating interaction when the robot was present in Session II. A detailed breakdown of the social interactions toward the robot is depicted in Table 2. Note that these cues are often observed simultaneously with one another, and the measurement ratio is calculated against the total time of the interaction. It is also worth noting that girls spent 35.09% more time with the robot than boys, and they initiated significantly more conversation (39.96% more) while maintaining eye contact with the robot 24.52% more than the boys. On the other hand, boys initiated 15.62% more gestural interactions and provided 18.50% more demonstrations of the task than girls.
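The relative differences between girls and boys quoted above appear to be ratios of the per-category percentages in the breakdown table. As a worked check, and assuming that reading is correct, the short sketch below recomputes three of them from the tabulated values. The helper `percent_more` is a hypothetical name introduced here for illustration.

```python
def percent_more(a, b):
    """Return how much larger a is than b, as a percentage of b."""
    return (a / b - 1.0) * 100.0


# Percentages of total interaction time, copied from the breakdown table.
female = {"eye_contact": 38.49, "gestural": 14.85, "vocal": 29.21}
male = {"eye_contact": 30.91, "gestural": 17.17, "vocal": 20.87}

girls_vs_boys_eye = percent_more(female["eye_contact"], male["eye_contact"])
girls_vs_boys_vocal = percent_more(female["vocal"], male["vocal"])
boys_vs_girls_gestural = percent_more(male["gestural"], female["gestural"])

print(f"{girls_vs_boys_eye:.2f}")       # 24.52
print(f"{girls_vs_boys_vocal:.2f}")     # 39.96
print(f"{boys_vs_girls_gestural:.2f}")  # 15.62
```

These three values match the eye-contact, conversation, and gestural figures reported in the text.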

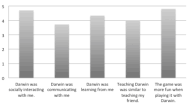

Following each session, participants were asked a number of questions concerning their interaction experience with the robot. On a 5-point Likert scale, from strongly disagree (1) to strongly agree (5), participants reported in the post-experiment survey that they felt the robot was socially interacting with them (m=4.7) and socially communicating with them (m=3.72), thought Darwin was learning from them (m=4.33) in a manner similar to their friends (m=4.01), and thought the robot enhanced their overall experience with the virtual game (m=4.8) (Figure 4).

In the pilot study with two children with ASD, the first participant (male, age 9) demonstrated close to average occurrences of social behaviors when the robot was present, compared to the typically developing group. In Session I, the time he spent initiating interaction with the experimenter was 45% of the average for the typically developing group. In Session II, the amount of time he spent initiating social behaviors toward the robot was 91% of that of the combined comparison group. When compared to the boys in the comparison group alone, he initiated 3.78% more social behaviors. The observed behaviors were eye contact (28.23%), gestural interaction (12.17%), and vocal interaction (28.90%). The second participant (male, age 6) eagerly participated in the task but did not initiate any interaction with the experimenter or the robot. He spent most of the session observing the robot and talking to himself, but also talking to his parent about the robot (28.14%). Though his interaction was not directed at the robot, the robot’s behavior mediated a conversation with his parent, which amounted to 73% of the comparison group’s average social-behavior time.

DISCUSSION AND FUTURE WORK

In this study, our goal was to assess the ability of a social learning robot to engage children in exhibiting social behavior. The results of our study show that the child participants were motivated to teach the robot, and this process naturally fostered the emergence of social behaviors. It was also shown that the tablet provided an intuitive environment for task engagement with the robot. The pilot study with children with ASD, as discussed in this paper, also provides some preliminary evidence for understanding both the limitations of the system and the attributes that are essential for establishing long-term interaction for engagement. Future efforts will focus on enhancing the autonomy of the system such that the gaming App adapts in direct correlation with the adaptation of the robot’s social behaviors. This will ensure that both components correlate and grow with the capabilities of the child, and that the system remains continuously engaging. Also, since our target demographic is children with disabilities, our next set of trials will focus on engaging more children with Autism Spectrum Disorder in the experimental protocol. As part of this future work, we will also study how aspects of the robot (movement, sound, emotion expression) might affect interaction.

REFERENCES

Baxter, P., Wood, R., & Belpaeme, T. (2012). A Touchscreen based ‘Sandtray’ to facilitate, mediate and contextualise human robot social interaction. In Proc. HRI’12. Boston, MA.

Brown, L., & Howard, A. (2013). Engaging Children in Math Education using a Socially Interactive Humanoid Robot. IEEE-RAS International Conference on Humanoid Robots. Atlanta, GA.

Feil-Seifer, D., & Matarić, M.J. (2005). Defining Socially Assistive Robotics. IEEE International Conference on Rehabilitation Robotics (ICORR-05). Chicago, IL. pp. 465-468.

Gartner, A. (1971). Children Teach Children: Learning by Teaching. Harper & Row, New York.

Ha, I., Tamura, Y., Asama, H., Han, J., & Hong, D. (2011). Development of open humanoid platform darwin-op. In: SICE. pp. 2178–2181.

Howard, A. (2013). Robots Learn to Play: Robots Emerging Role in Pediatric Therapy. 26th Int. Florida Artificial Intelligence Research Society Conference, May.

Kidd, C., & Breazeal, C. (2008). Robots at home: Understanding long-term human-robot interaction. IROS. pp. 3230–3235.

López, A.F., Rodríguez-Fórtiz, M.J., Rodríguez-Almendros, M.L., & Martínez-Segura, M.J. (2012). Mobile learning technology based on iOS devices to support students with special education needs. Computers & Education.

Park, H.W., & Howard, A. (2013). Providing tablets as collaborative-task workspace for human-robot interaction. 8th ACM/IEEE International Conference on Human-Robot Interaction. pp. 207-208. Tokyo, Japan.

Popchilla’s World. (2012). URL: http://popchillasworld.com/ [Online accessed: December 2013].

Robins, J.B., Dautenhahn, K., & Dickerson, P. (2009). From isolation to communication: a case study evaluation of robot assisted play for children with autism with a minimally expressive humanoid robot. Proc. Second Inter. Conf. Advances in CHI (ACHI’09). pp. 205-211.

Scassellati, B., Admoni, H., & Matarić, M. (2012). Robots For Use in Autism Research. Annual Review of Biomedical Engineering. 14: 275-294.

Shah, N. (2011). Special education pupils find learning tool in ipad applications. Education Week. vol. 30. no. 22. pp. 1–16.

ACKNOWLEDGEMENT

This research was partially supported by the National Science Foundation under Grant No. 1208287.
