XFACT: DEVELOPING USABLE SURVEYS FOR ACCESSIBILITY PURPOSES

Drew Williams¹, Nadiyah Johnson¹, Amit Kumar Saha¹, Nathan Spaeth², Tereza Snyder², Dennis Tomashek², Sheikh Iqbal Ahamed¹ and Roger O. Smith²

¹ Math, Statistics and Computer Science Department, Marquette University, Milwaukee, WI

² R2D2 Center, University of Wisconsin-Milwaukee, Milwaukee, WI

ABSTRACT

Accessible structures and environments can mean the difference between an exemplary quality of life and a poor one for millions of Americans. However, determining the accessibility of a particular built environment can be difficult: any given environment calls for many types of measurements. Delivering the information that must be considered for a particular environment in an effective and efficient manner is a challenging problem in its own right. To address this problem, we have created xFACT, an application that assists in developing evaluations using a unique trichotomous tailored sub-branching scoring (TTSS) system. Surveys built on this rich scoring system allow users to develop detailed evaluations of environments that nonetheless remain easy to understand.

INTRODUCTION

Although accessibility is widely acknowledged as essential in today’s world, many people struggle to understand what exactly it means for an environment to be accessible. Laws and guidelines for accessibility exist, but they can be confusing. Individuals who want to use these guidelines to evaluate environments often require extensive training.

A method for developing consistently styled accessibility evaluations would greatly help here. Properly designed evaluations could convey all the information about required accessibility features that a user needs to assess an environment, without requiring extensive training. The system for developing these evaluations should also be flexible enough to create many different evaluations, while keeping those evaluations consistent in appearance.

In this paper, we discuss the development of xFACT, an application for creating usable, easy-to-understand assessments for a variety of purposes, including accessibility. We begin by discussing previous work related to xFACT, including earlier projects that used its unique scoring system. Next, we describe our proposed approach and walk through the development of the application. We then discuss what xFACT achieves, followed by concluding remarks.

PAST WORK

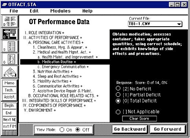

a “Yes” or “No”; any partial (“Maybe”) response caused the next level of sub-questions to be asked, to obtain more information from the user. This method of assessment sped up survey completion, since users did not have to answer, or even consider, questions that were not directly relevant to them at a particular time.

Over time, additional taxonomies were developed and delivered through apps similar to OT FACT, using the same structure. For example, Trans-FACT assessed public transportation (Rehabilitation Research Design and Disability Center, 2009), while RATE-IT assessed the accessibility of restaurants (Park et al., 2011). More recently, Access Tools, an iPad application, has also adopted a structure similar to that of OT FACT (Williams et al., 2015). However, each of these taxonomies was implemented in its own separate application.

PROPOSED APPROACH

In the past, each new taxonomy required building a new application. We instead propose a single system that can import new taxonomies and create software evaluations using the TTSS method. This allows us to create consistent surveys that help the user evaluate an environment against different accessibility-related criteria. In particular, our ideal system would have the following features:

Flexibility

Our application should not be limited to one particular kind of taxonomy; rather, it should be flexible enough to support many different taxonomies. Regardless of content, each new taxonomy should be displayed in a consistent format, presenting its content as a new survey for users to fill out. New surveys may also require different answer sets.

In addition to flexibility in survey type, our system should be flexible in the operating systems it runs on. Ideally, a user could work on a tablet in the field and then report on their findings at a desktop computer. For this reason, our system should be developed with multiple platforms in mind from the outset.

Efficiency

A user should be able to complete their survey questions as quickly as possible, without being slowed down by superfluous or irrelevant questions. Designing an efficient assessment experience can be difficult because of the amount of information that may need to be considered while completing a survey. In addition, a user should be able to create new taxonomies efficiently, without relying on proprietary tools.

Usability

If these criteria are met, we will have succeeded in creating a flexible, usable, and efficient application for generating surveys.

METHODOLOGY

In its current state, xFACT is a desktop application that runs on current Windows and Macintosh operating systems. As a desktop application, xFACT allows users to navigate and complete surveys using a mouse, a keyboard, or both. Future versions of xFACT are planned to run as web and mobile applications as well; in those versions, xFACT will adapt to the input methods appropriate for the platform, such as touch input on smartphones and tablets.

Upon starting, xFACT gives the user access to a number of pre-built taxonomies. Each pre-built taxonomy lets the user save and load surveys that use it; for example, a user who picks the RATE-IT taxonomy to rate a restaurant's accessibility can save rated restaurants and load them later for review. Taxonomies are authored in Excel spreadsheets, with the question hierarchy (and therefore the branching) determined by indentation. In the future, we plan to let users add taxonomies they have created themselves. Regardless of the taxonomy chosen, the user interface remains the same: the screens for loading, viewing, and saving surveys share one structure, and only the color scheme changes from taxonomy to taxonomy.
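The paper states only that question hierarchy is determined by indentation in the spreadsheet. A minimal Python sketch of how such rows could be turned into a question tree follows, assuming each row has already been read out of Excel as an `(indent_level, text)` pair. How the level is actually encoded in xFACT's spreadsheets (leading spaces, cell indentation, or column position) is not described, and the names `Node` and `build_tree` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One question in a taxonomy tree (hypothetical structure)."""
    text: str
    level: int
    children: list["Node"] = field(default_factory=list)


def build_tree(rows: list[tuple[int, str]]) -> list[Node]:
    """Turn (indent_level, text) rows into a question hierarchy.

    Assumes rows appear top to bottom as in the spreadsheet, level 0 is a
    top-level question, and a row is at most one level deeper than the
    row before it.
    """
    roots: list[Node] = []
    stack: list[Node] = []  # path from a root down to the most recent row
    for level, text in rows:
        if level > len(stack):
            raise ValueError(f"row {text!r} skips an indentation level")
        node = Node(text, level)
        del stack[level:]  # pop back up to this row's parent
        if stack:
            stack[-1].children.append(node)
        else:
            roots.append(node)
        stack.append(node)
    return roots
```

For instance, rows `(0, "Entrance")`, `(1, "Door width")`, `(0, "Restroom")` would yield two top-level questions, with "Door width" as a child of "Entrance". Keeping a stack of the current path lets the hierarchy be built in a single pass over the rows.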

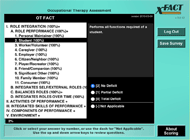

Questions in a taxonomy are scored and parsed according to the aforementioned TTSS format, which governs how questions are asked and how they branch depending on the answers given. The “trichotomous” part of the name refers to the three answers that can be given to a question: “Yes”, “No”, and “Maybe”. For scoring, “Yes” is worth 2 points, “Maybe” 1 point, and “No” 0 points. If the user answers “Yes” or “No”, they continue to the next question at the same level of the hierarchy, and the question receives full points (for “Yes”) or no points (for “No”). If the user answers “Maybe”, either the question is awarded 1 point (if it has no child questions) or its child questions become visible, and the user answers them in the same manner. In this way, the survey is tailored to the needs and abilities of the user: a professional may move through xFACT’s surveys very quickly, while a user less familiar with the survey system or the survey material may take more time, because they work through more of the sub-branches. Some questions also offer a fourth answer, “Not Applicable”, which removes the question from scoring entirely when selected.
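The scoring rules above can be sketched in Python. This is a hypothetical illustration, not xFACT's code: the paper does not say how the points of child questions roll up into a “Maybe” parent, so the sketch assumes the parent earns the same fraction of its 2 points that its children earned, and it treats unanswered questions like “Not Applicable” ones (excluded from both totals). `Answer`, `Question`, `score`, `total`, and `percent` are illustrative names.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Answer(Enum):
    YES = "Yes"
    MAYBE = "Maybe"
    NO = "No"
    NOT_APPLICABLE = "Not Applicable"


POINTS = {Answer.YES: 2, Answer.MAYBE: 1, Answer.NO: 0}  # values from the paper
MAX_POINTS = 2


@dataclass
class Question:
    text: str
    answer: Optional[Answer] = None  # None means not yet answered
    children: list["Question"] = field(default_factory=list)


def total(questions: list[Question]) -> tuple[float, int]:
    """Sum (earned, possible) points over a list of questions."""
    earned, possible = 0.0, 0
    for q in questions:
        e, p = score(q)
        earned += e
        possible += p
    return earned, possible


def score(q: Question) -> tuple[float, int]:
    """Return (earned, possible) points for one question.

    Assumption: unanswered and "Not Applicable" questions count toward
    neither total, and a "Maybe" with children earns the same fraction of
    MAX_POINTS that its children earned (a roll-up rule chosen for this sketch).
    """
    if q.answer is None or q.answer is Answer.NOT_APPLICABLE:
        return 0.0, 0
    if q.answer is Answer.MAYBE and q.children:
        earned, possible = total(q.children)
        if possible == 0:  # no scorable child answers yet
            return 0.0, 0
        return MAX_POINTS * earned / possible, MAX_POINTS
    # "Yes", "No", or a "Maybe" on a question without children.
    return float(POINTS[q.answer]), MAX_POINTS


def percent(questions: list[Question]) -> float:
    """Overall score as a percentage of the points still in play."""
    earned, possible = total(questions)
    return 100.0 * earned / possible if possible else 0.0
```

Under these assumptions, a “Yes” question plus a “Maybe” question whose two children were answered “Yes” and “No” would score 3 of 4 points, or 75%, and adding a “Not Applicable” question would leave that percentage unchanged.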

Some questions simply present explanatory text and a continue button instead of TTSS-style branching and scoring. Surveys can also use other answer sets as needed. In every case, each question has a short description, shown in outline format on the left side of the screen, that aligns with more detailed text and an answer set on the right side. The detailed text helps a user understand how best to answer a question the first time through a survey; later, the user can scan the short description and, if they remember the context, answer quickly without rereading the detailed text.
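One way to model questions that pair a short outline label with a detailed description and their own answer set is sketched below in Python. The field names, the default TTSS answer tuple, the single-option “Continue” set for explanatory items, and the dotted outline numbering are all assumptions made for illustration; the paper does not describe xFACT's internal data model.

```python
from dataclasses import dataclass, field

# Hypothetical answer sets; the paper says additional sets are supported
# but does not list them.
TTSS_ANSWERS = ("Yes", "Maybe", "No")
CONTINUE_ONLY = ("Continue",)  # explanatory text with a continue button


@dataclass
class Item:
    short: str  # short description shown in the left-hand outline
    detail: str  # detailed text shown on the right with the answer set
    answers: tuple[str, ...] = TTSS_ANSWERS
    children: list["Item"] = field(default_factory=list)


def outline(items: list[Item], prefix: str = "") -> list[tuple[str, Item]]:
    """Flatten a question hierarchy into numbered outline entries.

    Each entry pairs an outline number such as "2.1" with its item, so the
    left pane can list short labels while the right pane shows item.detail.
    """
    entries: list[tuple[str, Item]] = []
    for i, item in enumerate(items, start=1):
        number = f"{prefix}{i}"
        entries.append((number, item))
        # Children are numbered beneath their parent, e.g. "2.1", "2.2".
        entries.extend(outline(item.children, number + "."))
    return entries


# Hypothetical example survey.
survey = [
    Item("Welcome", "Read these instructions before you begin.", CONTINUE_ONLY),
    Item("Entrance", "Can the main entrance be used independently?",
         children=[Item("Door", "Is the doorway wide enough for a wheelchair?")]),
]
for number, item in outline(survey):
    print(f"{number} {item.short}: {item.detail} {item.answers}")
```

Keeping the answer set on each item, rather than hard-coding “Yes/Maybe/No”, is one way a single interface could serve both TTSS questions and explanatory screens.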

As a user moves through the survey, a progress bar and the percentages next to each question rise or fall to reflect the user's progress and score as answers change. A completed survey can be saved and loaded later. Reporting features are not yet implemented in the latest version of xFACT, but we plan to add them in the future.
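The progress bar's behavior can be illustrated with a small Python sketch, assuming progress is the share of currently visible questions that have been answered; the paper does not give xFACT's formula. Under that assumption, children appear only beneath a “Maybe”, so changing an answer to “Maybe” can reveal new unanswered questions and make progress fall, consistent with values that both increase and decrease. `Q`, `visible`, and `progress` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Q:
    # "Yes", "Maybe", "No", "Not Applicable", or None if unanswered.
    answer: Optional[str] = None
    children: list["Q"] = field(default_factory=list)


def visible(questions: list[Q]) -> list[Q]:
    """Questions currently on screen: children appear only under a "Maybe"."""
    shown: list[Q] = []
    for q in questions:
        shown.append(q)
        if q.answer == "Maybe":
            shown.extend(visible(q.children))
    return shown


def progress(questions: list[Q]) -> float:
    """Fraction (0.0 to 1.0) of visible questions that have an answer."""
    shown = visible(questions)
    if not shown:
        return 0.0
    return sum(q.answer is not None for q in shown) / len(shown)
```

For example, with two unanswered top-level questions, answering the first “Yes” puts progress at 50%; switching it to “Maybe” and revealing two unanswered children drops progress to 25%. Questions under a “Yes” or “No” never enter the count, which mirrors how the TTSS structure hides irrelevant questions.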

DISCUSSION

xFACT is designed to be a flexible, efficient, and usable application for developing and completing surveys. Because taxonomies are created in Microsoft Excel, creating a taxonomy is a simple process that requires no tools beyond those most users already have. Letting users create their own taxonomies (a planned feature) should also keep xFACT flexible enough to meet a wide range of survey needs. Furthermore, designing xFACT for multiplatform deployment from the start should allow it to be used on mobile devices as well as desktops and laptops, even though it currently runs only on desktops and laptops.

Using the TTSS system to complete surveys within xFACT also makes for an efficient workflow. Users see only applicable questions; questions that are not relevant to a user’s needs stay hidden as part of the TTSS structure. Scores are tallied as the user progresses through the survey. A reporting system has not yet been implemented, but it is in development and should further improve xFACT's efficiency.

Finally, xFACT is designed to be as usable as possible. First, the application adapts to the user's preferred input method, whether keyboard or mouse; when the application moves to mobile platforms, it will support touch input as well. xFACT also provides both a short and a detailed description for each question, so a user can read what a question is asking, or simply scan the short name once they are used to a particular survey. In addition, xFACT provides a progress bar showing where the user is in a survey, which reduces confusion about how much of the survey remains.

CONCLUSION

xFACT can be used to create usable, efficient surveys for many purposes. The system is flexible in both the content it accepts and the types of evaluations it can display. In particular, using xFACT to consolidate accessibility information helps solve a difficult outstanding problem. Many rules and guidelines govern whether an environment is accessible, and as a result a great deal of information must be condensed. In xFACT, the TTSS system condenses many accessibility questions into an easy-to-understand survey that presents the user only with information directly relevant to their situation. This helps users feel more at ease determining whether their environment is accessible.

REFERENCES

Park, M., Smith, R. O., & Liegl, K. (2011, June). The Restaurant Accessibility and Task Evaluation Tool (RATE-IT). Presented at the Universal Design Conference of the FICCDAT International Conference. Toronto, Canada.

Rehabilitation Research Design and Disability Center. (2009). Transportation – Functional Assessment Compilation Tool (Trans-FACT). Retrieved 17 Feb. 2016, from http://www.r2d2.uwm.edu/xfact/trans-fact.html

Smith, R. O. (2002). OTFACT: Multi-level performance-oriented software with an assistive technology outcomes assessment protocol. Technology and Disability, 14, 133–139.

Williams, D., et al. (2015). ACCESS TOOLS: Developing A Usable Smartphone-Based Tool For Determining Building Accessibility. Proceedings of the RESNA 38th Annual Conference on Technology and Disability. Retrieved 17 Feb. 2016, from http://www.resna.org/sites/default/files/conference/2015/cac/williams.html