Sajay Arthanat, Ph.D., OTR/L, ATP

Associate Professor, Department of Occupational Therapy

College of Health & Human Services

University of New Hampshire

Livia Gosselin

Graduate Occupational Therapy Student, Department of Occupational Therapy

College of Health & Human Services

University of New Hampshire

Background

Table 1: Items evaluated in the USAT-CA

| Domain | Skill or component | Item |
|---|---|---|
| Motor Skills | Posture | Seating stability |
| | | Proximal seating angles |
| | | Distal angles |
| | Coordination | Forward reach |
| | | Text entry |
| | | Mouse pointing |
| | | Scrolling |
| | | Movement between mouse and keyboard |
| | Mobility | Movement of distal extremities for keyboard activation |
| | | Movement of distal extremities for mouse activation |
| | | Activate two keys simultaneously |
| | | Grasp |
| | Manipulation | Turn on computer |
| | | Insert any hardware |
| | | Item or text selection with mouse |
| | | Drag and drop |
| | | Keyboard shortcuts |
| | | Hold mouse and scroll |
| | | Swipe touch pad |
| | Endurance | Persistence with the task |
| Sensory Skills | Visual Skills | Can read text |
| | | Locate, identify and relocate icons |
| | | Locate, identify and relocate items in menu |
| | | Scanning: left to right |
| | | Scanning: top to bottom |
| | | Locate specific items or lines within text |
| | | Focus on moving targets |
| | | Switch focus on moving targets |
| | | Resolution |
| Computer Equipment (Device) | Monitor | Placement |
| | | Size |
| | Keyboard | Letter Spacing |
| | | Visibility |
| | | Size |
| | | Button Location |
| | Mouse | Cursor fluidity |
| Computer set up | Work Station | Seating equipment |
| | | Lighting |
| | | Noise |
| | | Space and approach |

Individuals with physical disabilities require assistive technology (AT) control interfaces to access computers for tasks pertaining to daily living, education, employment, social participation and leisure. These interfaces include a wide array of standard and adapted keyboards, devices for mouse control, speech recognition systems, on-screen keyboards, direct or indirect selection switches and brain interfaces. Selection of an optimal interface by an AT provider relies on evaluating the individual's motor and process skills and identifying an ideal body site matched to his or her context and computer access needs. With limited clinical evidence and few standardized evaluation tools available, most AT providers rely on experience and arbitrary methods to select control interfaces.

Purpose

This presentation will highlight the Usability Scale for Assistive Technology-Computer Access (USAT-CA), an observation tool designed to facilitate the evaluation and selection of control interfaces for computer access by individuals with disabilities. Based on the Human Activity Assistive Technology model (Cook & Polgar, 2015) and the USAT measurement framework (Arthanat et al., 2007), the tool takes into consideration the interaction of the individual’s motor and sensory skills with the computer equipment and the influence of the computer set up. The presentation will focus on the tool’s methodological development, i.e., its validity and reliability.

Methods

This psychometric study is being conducted in three phases. In phase I, a draft version of the USAT-CA was developed from measurable indicators identified in earlier research (Arthanat et al., 2007) and from a task analysis of individuals with disabilities interacting with their computers. In phase II, four highly experienced RESNA-certified computer access AT providers were chosen as experts to pilot test and complete the USAT-CA. The experts reported 8, 15 and 27 years of experience in providing computer access AT services, with 90%, 75% and 50% of their time, respectively, devoted to direct client service in this area. The protocol involved having them observe videos of two individuals with disabilities performing routine tasks on their computers. One of the individuals had sustained a spinal cord injury at the C4-C5 cervical level, and the other had a rare form of muscular dystrophy. For the content validation following the evaluations, the experts were asked how well the USAT-CA measured the skills needed to interact with the computer, the effectiveness of the computer equipment and the set up. An overall content validation section covered the comprehensiveness, clarity, ease of use and value of the tool. Experts were also asked to suggest necessary revisions to the tool’s pilot version.

Phase III will consist of online field testing of the USAT-CA, revised according to the recommendations of the expert providers. Thirty RESNA-certified computer access ATPs have been recruited, and the field testing is scheduled for completion in March 2017. The providers will observe the above videos and complete the revised USAT-CA by rating the individual’s motor and process skills in controlling the interface, the interface placement, the appropriateness of the motor site(s), and environmental factors. The AT providers will also complete a shorter version of the same content validation questionnaire. Descriptive analysis will be conducted to examine content validity.

Preliminary Results

The results in this submission are based mostly on phases I and II: the pilot testing and content validation of the USAT-CA, which three of the four experts have completed. The remaining study data will be collected, analyzed and included in the conference presentation. At present, a Cohen’s kappa analysis of the pilot testing indicates modest, yet mostly statistically significant, agreement among the three raters on the 40 items evaluated in the USAT-CA. Each item was rated on a 7-point scale that included two nominal choices (Not applicable and Cannot be observed). The items are listed in Table 1 and the agreement scores in Table 2. For participant 1, pairwise agreement between the experts’ ratings ranged from 0.20 to 0.35. For participant 2, agreement was 0.20 between experts 1 and 2 and 0.25 between experts 2 and 3, while agreement between experts 1 and 3 was poor and not statistically significant.
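As an illustration of the agreement statistic reported here, the following minimal sketch computes an unweighted Cohen’s kappa for one pair of raters. It compares the observed proportion of matching ratings with the proportion expected by chance from each rater’s category frequencies. The function name `cohens_kappa` and the sample ratings are hypothetical and are not study data; the labels `"NA"` and `"CO"` are assumed stand-ins for the two nominal choices, and treating every rating as a nominal category is an assumption that may differ from the analysis actually performed.

```python
import math
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Unweighted Cohen's kappa for two raters scoring the same items.

    Every rating (numeric or a nominal label such as "NA") is treated
    as an unordered category. This is an illustrative assumption, not
    necessarily the analysis used in the study.
    """
    if len(ratings_a) != len(ratings_b) or len(ratings_a) == 0:
        raise ValueError("Both raters must rate the same non-empty item set")

    n = len(ratings_a)

    # Observed agreement: share of items given the identical rating.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n

    # Chance agreement: product of the raters' marginal proportions,
    # summed over every category either rater used.
    counts_a = Counter(ratings_a)
    counts_b = Counter(ratings_b)
    categories = counts_a.keys() | counts_b.keys()
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)

    if expected == 1.0:
        # Both raters used one and the same category; kappa is undefined.
        return math.nan

    return (observed - expected) / (1.0 - expected)


# Hypothetical ratings for four items (not study data).
expert_a = [5, 5, "NA", "NA"]
expert_b = [5, "NA", "NA", "NA"]
print(cohens_kappa(expert_a, expert_b))  # prints 0.5
```

In this example the raters match on three of four items (observed agreement 0.75) while chance agreement is 0.5, giving a kappa of 0.5. Values near zero indicate agreement close to what chance alone would produce.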

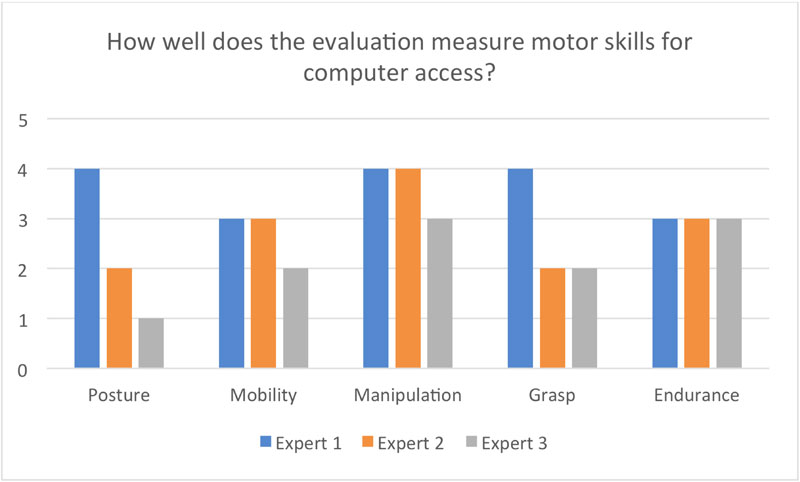

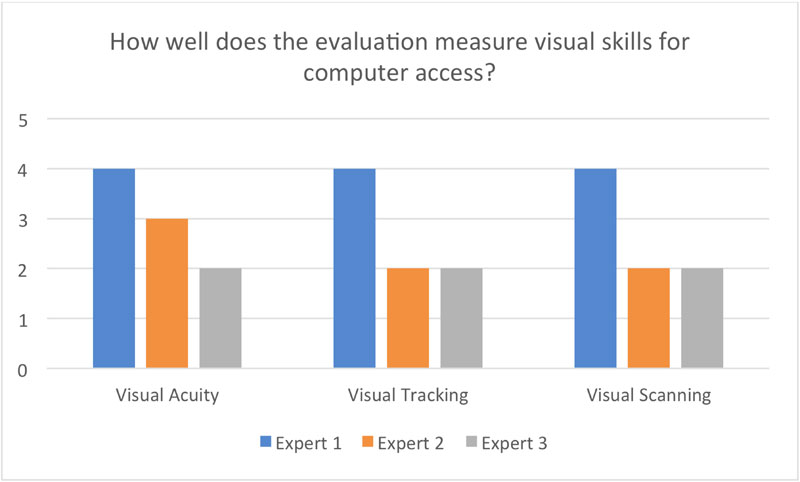

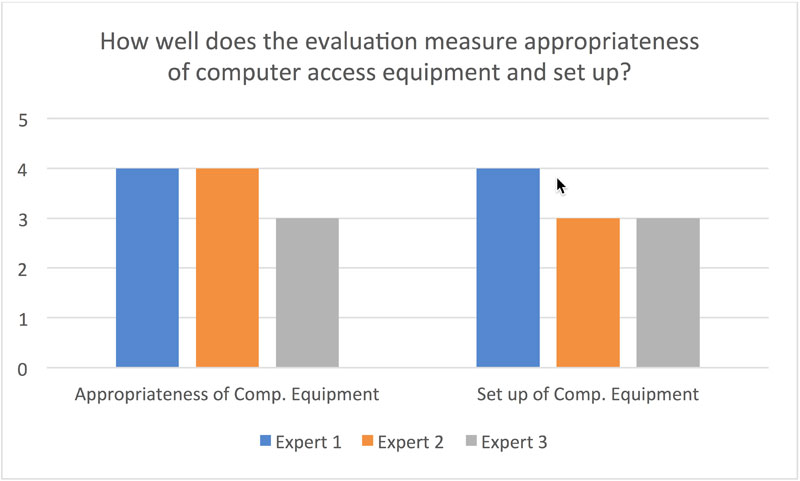

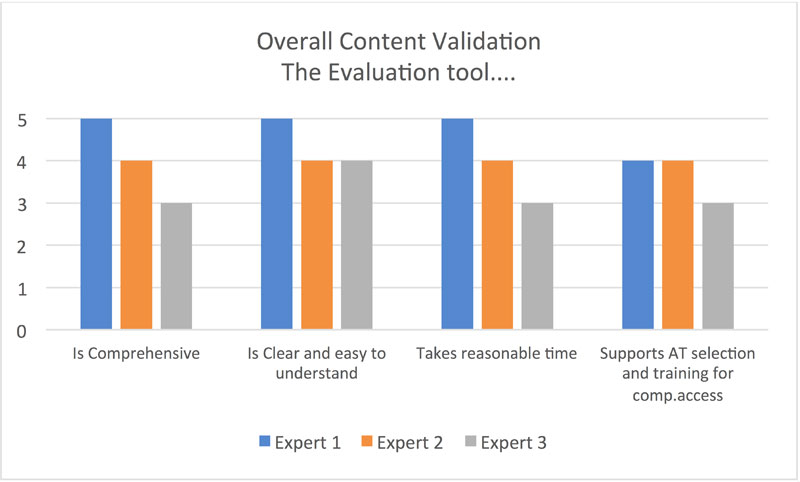

For the content validation, experts 1 and 2 indicated that the tool measured most of the key computer access variables “moderately well” to “very well” (see Figures 1, 2 and 3), while expert 3 felt that many of the user skills were captured only “slightly” well. The experts also suggested additional skills to include. Experts 1 and 2 “agreed” to “strongly agreed” on the evaluation’s overall content (Figure 4).

Discussion

While the USAT-CA’s pilot test and content validation data show promise, further revisions to the tool are warranted prior to field testing. Key revisions to be made include addition of items, narrowing the response scale for improved interrater reliability, and changing the language of some items.

Effective selection of computer access interfaces continues to be a critical and challenging task. Once fully developed, the USAT-CA will be a useful resource for the effective provision of computer access AT to individuals with disabilities.

Table 2: Pairwise expert agreement on USAT-CA ratings

| Participants (Clients) | Expert Agreement (n=3) | Cohen’s kappa | Significance (p<0.05) |
|---|---|---|---|
| Participant Video 1 | Expert 1 & Expert 2 | 0.24 | 0.001** |
| | Expert 2 & Expert 3 | 0.20 | 0.008** |
| | Expert 1 & Expert 3 | 0.35 | 0.000** |
| Participant Video 2 | Expert 1 & Expert 2 | 0.20 | 0.008** |
| | Expert 2 & Expert 3 | 0.25 | 0.000** |
| | Expert 1 & Expert 3 | 0.011 | 0.821 |

Figure 2: Content validation of USAT-CA: Visual skills

References

Arthanat, S., Bauer, S. M., Lenker, J. A., Nochajski, S. M., & Wu, Y. W. B. (2007). Conceptualization and measurement of assistive technology usability. Disability and Rehabilitation: Assistive Technology, 2(4), 235-248.

Cook, A. M., & Polgar, J. M. (2015). Assistive technologies: Principles and practice (4th ed.). Elsevier Health Sciences.