V2 - A New Industry Standard for Universal Interface Sockets to Allow Control of Mainstream Products from any Device/Interface Modality

ABSTRACT

Technologies change so quickly that assistive technologies simply cannot keep up. A new standard under development by the V2 working group of INCITS may provide a mechanism for addressing this problem in a powerful new way that would be stable over time. The standard is designed to provide a mechanism for any product (device or service) to allow other devices to act as an alternate interface for the product. Thus assistive technologies, PDAs and universal remote controls can be used to control arbitrary (conforming) products. It also opens the way for intelligent agent control of everyday appliances as well as net appliances. The standard is based on the concept of the "user interface socket" that allows modality independent control of the product. Smart homes and natural language control are discussed.

Keywords:

interface socket; remote control; interface; universal access; standard

BACKGROUND

The challenge being addressed by this standard was to develop a mechanism to allow arbitrary devices (TVs, thermostats, alarm clocks, network based services, etc.) to be able to describe their functionality in a fashion that was independent from the user interfaces on the products. This would then allow users to attach alternate interfaces to the product (also called "target" or "target product") as well as to control the target product from a remote location using an arbitrary device. Key to this approach is the concept of a user interface socket. This socket would provide access to all of the device's functionality without having to go through the target product's user interface.

For example, if all of the mass-market products in a home had a standard "interface socket" on them, and that "interface socket" was accessible over a home network, an individual would be able to control any product from any other room in the house. Also, if that "interface socket" was modality independent, the person could control the product with a visual interface on their laptop, an audio interface on their cell phone, or a Braille interface on their assistive technology.

Although such an interface would be of great benefit to individuals with disabilities, there is essentially no likelihood that mainstream manufacturers would build such an "interface socket" into all of their products simply to make them accessible by individuals with disabilities. Thus, this "interface socket" had to have very compelling mass-market appeal. Therefore, the group paid special attention to creating a standard that would provide a value-add and a marketing advantage to products that contained such sockets.

Natural Language Interfaces

One key element is the ability to use such devices with natural language interfaces. Although it is not practical to build highly intelligent natural language interfaces into every product, it would be practical (and it will soon be possible) to create an affordable handheld device (perhaps built into a cell phone) which would accept speech input and could process natural language requests. It could then control various devices in the environment if those devices provided such a means for discovery and control. It could also allow a traveler to use their own language when controlling devices and services in foreign countries. To be effective, the devices would need to be able to expose their functionality in a way that would allow a natural language controller to understand and operate them without requiring the user to program a natural language controller for each product they encounter.

It would also be important that individuals be able to purchase the standard appliances today (or in the near future) that they intended to use with natural language controllers in the future. By purchasing devices with such "interface sockets", it would be possible for individuals to have all of their products become smarter and work better every time a new natural language controller or upgrade was released.

Mixed Interface Controllers

This type of interface also allows for mixed interface controllers, which will probably be the most popular. Some interface activities lend themselves to commands and some lend themselves to direct manipulation. For example, it may be very convenient to say "please record The West Wing tomorrow night". However, when channel surfing, it is unlikely that a person would want to keep saying "channel up, channel up". It is more likely that the person would say "turn the TV on" and then use the up and down buttons on their phone or controller to move through the channels.

The Standard

The standard being developed is platform agnostic and can be implemented on top of different software and networking platforms, including Jini/Java, UPnP, and HAVi, running over wired or wireless connections such as HomeNet, Ethernet, FireWire, DSL, cable, 802.11, Bluetooth, or any interconnected combination. The architecture employs an implicit two-way synchronization model in which state information is shared between a target product and the user's device, a Universal Remote Console (URC).
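As a rough illustration of the two-way synchronization idea, the following Python sketch links a target and a controller so that a state change made on either side appears on the other. The `Endpoint` class, its methods, and the state keys are hypothetical names chosen for this sketch; they are not taken from the standard, and no real network transport is modeled.

```python
from typing import Any, Dict, Optional


class Endpoint:
    """One side of a synchronized pair: a target product or a controller (hypothetical)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.state: Dict[str, Any] = {}      # local copy of the shared state
        self.peer: Optional["Endpoint"] = None

    def connect(self, peer: "Endpoint") -> None:
        """Link two endpoints in both directions."""
        self.peer = peer
        peer.peer = self

    def set(self, key: str, value: Any) -> None:
        """Apply a local change and push it to the peer."""
        self.state[key] = value
        if self.peer is not None:
            self.peer._receive(key, value)

    def _receive(self, key: str, value: Any) -> None:
        """Apply a change that originated on the other side (no echo back)."""
        self.state[key] = value


tv = Endpoint("tv")
controller = Endpoint("controller")
tv.connect(controller)

controller.set("channel", 5)   # user acts on the controller
tv.set("power", "on")          # state changes on the target itself
```

After these two calls both endpoints hold the same state, whichever side the change started on.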

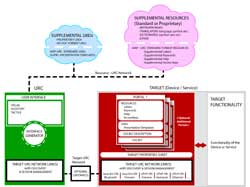

The architecture uses a combination of a "user interface socket", a "user interface implementation description" (UIID), and "supplemental resources" in order to provide a maximally flexible system that puts a minimum burden on target service or product manufacturers (Figure 1). It also allows target manufacturers to create highly customized, stylized interfaces if they wish, while still maintaining a flexible and abstract user interface infrastructure that allows for all-visual, all-verbal, or all-tactile interfaces as called for by any particular individual, environment, task, or situation.

User Interface Socket

The user interface socket is a low-level description of a particular target product or service and specifies its input and output requirements. The socket describes the functionality and state of the target as a set of typed data and command elements. The data elements on the socket provide all of the data that are manipulated by or presented to a user (including all functions, data input, indicators, and displays). Together with the commands, they provide access to the full functionality of the target device or service in a modality-independent form (i.e., the socket does not assume a particular type of interface or display).

Elements in the user interface socket may have constraints such as maximum and minimum values, dependencies that specify when the element is readable and writable by the user, and semantic tagging. Dependencies are used to express relationships among the elements of the user interface socket. For example, a command may only become active when values for specific data elements have been set by the user. A "withdraw" command, for instance, must be preceded or accompanied by 1) the user's identity, 2) user authentication, 3) the amount of money, expressed in multiples of 20 with an upper limit of 200, 4) the type of account the money should be withdrawn from, and 5) if the person has multiple accounts of that type, the specific account name or number.
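The socket concept can be sketched in Python as a set of typed data elements with constraints, plus commands that become active only when their dependencies are satisfied. This is a minimal sketch under stated assumptions, not the standard's own notation: the class names, element names, and the minimum of 20 are hypothetical, and the fifth precondition (choosing among multiple accounts) is left out for brevity.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Optional


@dataclass
class DataElement:
    """A typed socket data element with optional constraints (hypothetical model)."""
    name: str
    type_: type
    value: Any = None                  # None means "not yet set by the user"
    minimum: Optional[int] = None
    maximum: Optional[int] = None
    multiple_of: Optional[int] = None

    def set(self, value: Any) -> None:
        """Type-check and constraint-check a value before storing it."""
        if not isinstance(value, self.type_):
            raise TypeError(f"{self.name} expects {self.type_.__name__}")
        if self.minimum is not None and value < self.minimum:
            raise ValueError(f"{self.name} must be at least {self.minimum}")
        if self.maximum is not None and value > self.maximum:
            raise ValueError(f"{self.name} must be at most {self.maximum}")
        if self.multiple_of is not None and value % self.multiple_of != 0:
            raise ValueError(f"{self.name} must be a multiple of {self.multiple_of}")
        self.value = value


@dataclass
class Command:
    """A socket command plus the names of the data elements it depends on."""
    name: str
    requires: List[str]


class Socket:
    """Modality-independent description of a target: data elements and commands."""

    def __init__(self, elements: List[DataElement], commands: List[Command]) -> None:
        self.elements: Dict[str, DataElement] = {e.name: e for e in elements}
        self.commands: Dict[str, Command] = {c.name: c for c in commands}

    def is_active(self, command_name: str) -> bool:
        """A command is active only once every element it depends on is set."""
        command = self.commands[command_name]
        return all(self.elements[n].value is not None for n in command.requires)


def build_withdrawal_socket() -> Socket:
    """Socket for the "withdraw" example; names and the minimum are assumptions."""
    return Socket(
        elements=[
            DataElement("identity", str),
            DataElement("credential", str),
            DataElement("amount", int, minimum=20, maximum=200, multiple_of=20),
            DataElement("account_type", str),
        ],
        commands=[
            Command("withdraw", ["identity", "credential", "amount", "account_type"]),
        ],
    )
```

A controller in any modality could call `is_active("withdraw")` to decide whether to offer the command, without knowing anything about how the target's own front panel presents it.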

User Interface Implementation Description (UIID)

A UIID is a user-oriented representation of a target that maps some or all of the user interface socket elements to interaction (presentation and user input) constructs. It provides a structure into which the elements of the presentation are embedded. A given target product can have any number of UIIDs, created by the manufacturer, by third parties, or by users. UIIDs could be provided for target products or services that are tailored to popular personal devices (PDAs, cell phones, etc.) or to a custom controller that is designed by the manufacturer to work specifically with their products. "Simple access UIIDs" could also be provided for individuals desiring access to only the basic functionality of a product. Compliance with this standard, however, would require that a modality-independent UIID (a "presentation template") exist that lets users access all of the functions of a target while making no assumptions about the form in which the interface will be presented to the user.
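The relationship between a socket and its UIIDs can be illustrated with a small Python sketch. Here a UIID is modeled simply as a mapping from socket element names to abstract interaction constructs, and a check confirms whether a given UIID reaches every element, as the presentation template must. The element names and construct names such as `toggle` and `select-one` are hypothetical and are not drawn from the standard.

```python
from dataclasses import dataclass
from typing import Dict, Set

# Element names of an imagined TV socket (assumed for illustration).
SOCKET_ELEMENTS: Set[str] = {"power", "channel", "volume", "record"}


@dataclass
class UIID:
    """Maps socket element names to interaction constructs (hypothetical model)."""
    name: str
    bindings: Dict[str, str]

    def covers(self, socket_elements: Set[str]) -> bool:
        """True if every socket element is reachable through this UIID."""
        return socket_elements <= set(self.bindings)

    def unmapped(self, socket_elements: Set[str]) -> Set[str]:
        """Socket elements this UIID leaves out."""
        return socket_elements - set(self.bindings)


# A modality-independent template must expose all of the target's functions.
PRESENTATION_TEMPLATE = UIID(
    "presentation-template",
    {"power": "toggle", "channel": "select-one", "volume": "range", "record": "trigger"},
)

# A "simple access" UIID may deliberately expose only basic functions.
SIMPLE_ACCESS = UIID("simple-access", {"power": "toggle", "channel": "select-one"})
```

In this sketch, `SIMPLE_ACCESS.unmapped(SOCKET_ELEMENTS)` reports the functions a simplified interface omits, while the presentation template leaves nothing unmapped.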

Supplemental Resources

Interface text and other interface resources can be stored independently of the UIIDs as supplemental resources, which are referenced by the UIIDs. These resources may include labels, help text, graphics, multimedia elements, translations of labels into different languages, and translations into different language levels. These resources may be provided by the device or be downloaded from the Internet. They could be located on the Web server of the target device manufacturer, the URC manufacturer, third party metadata sites, or user groups. A copy of the standard is available from INCITS V2 [1].
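Because supplemental resources may come from several places, a controller needs some way to resolve a label across them. The following Python sketch shows one possible lookup, assuming each source is a simple in-memory table keyed by element name and language code. The function name, the store layout, and the English fallback are assumptions made for illustration only.

```python
from typing import Dict, List, Optional, Tuple

# (socket element name, language code) -> label text
ResourceStore = Dict[Tuple[str, str], str]


def find_label(
    element: str,
    language: str,
    stores: List[ResourceStore],
    fallback_language: str = "en",
) -> Optional[str]:
    """Search the stores in order for a label in the requested language,
    then in the fallback language; return None if no store has one."""
    for lang in (language, fallback_language):
        for store in stores:
            label = store.get((element, lang))
            if label is not None:
                return label
    return None


# Labels shipped with the device, and extra translations from a third party.
device_store: ResourceStore = {("volume", "en"): "Volume", ("power", "en"): "Power"}
third_party_store: ResourceStore = {("volume", "es"): "Volumen"}
stores = [device_store, third_party_store]
```

With these tables, a Spanish-speaking user gets the third-party translation for `volume`, and falls back to the device's English label for `power`, which has no translation in either store.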

Prototyping work is currently underway at the University of Wisconsin [2] and at Georgia Tech. Prototypes to date have included implementations on top of different networking platforms and technologies. Target devices at the University of Wisconsin have included a commercial VCR, simulations of a TV and video player, lights and a fan. Georgia Tech efforts are reported elsewhere at this conference.

REFERENCES

[1] InterNational Committee for Information Technology Standards (INCITS), Technical Committee V2 on Information Technology Access Interfaces, www.incits.org/tc_home/v2.htm.

[2] Zimmermann, G., Vanderheiden, G. C., & Gilman, A. (2002). Prototype Implementations for a Universal Remote Console Specification. CHI 2002 Conference on Human Factors in Computing.

ACKNOWLEDGEMENT

This work was funded by the National Institute on Disability and Rehabilitation Research (NIDRR), U.S. Dept. of Education, under grant numbers H133E030012 IT RERC and H133E980008 IT RERC. The opinions expressed here do not necessarily represent the policy of the U.S. Dept. of Education.

CONTACT

Gregg Vanderheiden, Ph.D.

gv@trace.wisc.edu

Trace Research and Development Center,

2107 Engineering Centers Bldg,

1550 Engineering Drive,

Madison, WI 53706

(608) 262-6966