An Assistive Navigation Paradigm for Semi-Autonomous Wheelchairs Using Force Feedback and Goal Prediction

John Staton, MS, Manfred Huber, PhD

ABSTRACT

As computer technology advances and facilitates an increasing amount of autonomous sensing and control, the interface between the human and autonomous computer systems becomes increasingly important. This thesis investigates technologies aimed at integrating autonomous path planning capabilities with intuitive human control through a force-feedback interface. To this end, harmonic function path planning has been integrated to create a new, more robust force-feedback paradigm. A force-feedback joystick carries information in both directions: from the user to the robot control system, which uses it to infer and interpret the user’s navigation intentions, and from the harmonic function-based autonomous control system to the user, to indicate the system’s suggestions. To demonstrate and evaluate its capabilities, this new paradigm has been implemented within the Microsoft Robotics Studio framework and tested on a simulated mobile system, with positive results.

Keywords:

assistive technologies, smart wheelchairs, force feedback, harmonic functions, robotics

RELATED WORK

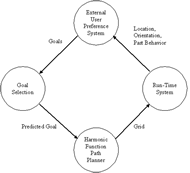

Illustration 1 - Outer Loop Diagram, indicating progression from the external user preference system, to the goal selection system, to the harmonic function path planner, into the run-time system.

Research on applying force-feedback technology to intelligent wheelchairs has been limited, but the existing work illustrates the potential of the approach.

The Luoson III, developed at the National Chung Cheng University in Taiwan, was designed by Ren Luo, Chi-Yang Hu, Tse Min Chen and Meng-Hisien Lin to aid a blind user in his or her everyday activities (1). The Luoson III uses an ultrasonic sensor arrangement on the wheelchair to gather environmental data, and uses a Microsoft Force Feedback Pro joystick to play force-feedback effects. The system uses Grey theory (2) to predict the trajectory of the wheelchair; if the wheelchair is going to strike an obstacle, a force effect is played in an attempt to repel the user away from the obstacle (3).

Illustration 2 - Run Time Loop Diagram, indicating a progression of the wheelchair’s location and orientation into a system to generate the force effect, to the joystick for force effect playback, and then to the motors, which translate the joystick’s position into motor commands that in turn affect the wheelchair’s location and orientation.

Another wheelchair system, from the University of Pittsburgh and based on the work of James Protho, Edmund LoPresti and David Brienza, provided a description of two differing philosophies for force-feedback effect design (4, 5). The two philosophies are “passive assistance” and “active assistance”: passive assistance results in a joystick that resists movement towards an obstacle, while active assistance causes the joystick to actively push away from the obstacle. Their pilot work indicated that “active assistance” gave the users the maximum efficiency (4). The designers tested their algorithms using a virtual reality system, and their results showed fewer collisions when using the system than when driving without it.

Finally, A. Fattouh, M. Sahnoun and G. Bourhis at Metz University in France created their own intelligent powered wheelchair, reminiscent of the Luoson III (6, 7). A series of distance sensors is used to create a feedback force vector that is directed away from the obstacle(s) perceived by the sensors. This system was implemented in a virtual reality simulation using a Microsoft Sidewinder Force Feedback 2 joystick and resulted in fewer collisions, while no significant influence was found on drive time or drive distance.

METHODOLOGY

The objectives for the assistive, semi-autonomous force-feedback paradigm introduced in this thesis for an intelligent wheelchair are naturally driven by two functionalities: the ability to infer the user’s intention in terms of their navigation goals, and the ability to help direct the user towards their intended goal and away from potential hazards. This natural distinction is reflected in the methodology through a design consisting of two interconnected loops: an outer loop that attempts to infer the intended goal location of the user (Illustration 1), and an inner, run-time loop that directs the user towards the inferred goal (Illustration 2).

Photograph - This photograph shows the implementation setup, with two monitors, one for the simulation window and one for the “Dashboard” GUI interface, and the Microsoft Sidewinder Force Feedback Pro joystick.

The objective of the outer procedural loop is to estimate the desired navigation goal of the user based on the information available to the system and to provide this estimate to the run-time loop, enabling it to direct the user towards that goal. The outer loop combines run-time data on the user’s position and behavior with information about the set of potential goals in the environment, provided by an external user preference system, to predict the intended goal location. This prediction is made by comparing a set of recent user actions to the actions necessary for approaching each individual goal. The more similar the user actions are to the path that would approach a goal, the more likely that goal is the user’s intended destination. Once the most likely goal is selected, the system calculates the harmonic function for that goal, which it passes in a discretized grid format to the run-time system. This process is repeated whenever the user’s intended goal needs to be recalculated based on new location, orientation and behavioral data from the user; the repetition can occur periodically at a specific rate, or can be event-driven, repeating when the user actions no longer match the path to the selected goal.
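The goal comparison described above can be illustrated with a minimal sketch. The paper does not give the similarity metric, so everything here is an assumption made for illustration: user actions are reduced to headings in radians, similarity is the mean cosine of the heading difference, and the hypothetical names `heading_similarity` and `predict_goal` are not taken from the implementation. The initial weights stand in for the values supplied by the external user preference system.

```python
import math


def heading_similarity(user_heading, goal_heading):
    """Cosine of the angle between two headings (radians); 1.0 means identical."""
    return math.cos(user_heading - goal_heading)


def predict_goal(recent_headings, goal_headings, initial_weights):
    """Pick the most likely goal and return it with normalized scores.

    recent_headings: the user's recent headings, oldest first (assumed form
        of the "recent user actions").
    goal_headings: dict mapping each goal to the headings its path would
        have suggested at the same time steps (assumed to be available).
    initial_weights: dict mapping each goal to its starting weight from
        the user preference system.
    """
    scores = {}
    for goal, suggested in goal_headings.items():
        sims = [heading_similarity(u, s)
                for u, s in zip(recent_headings, suggested)]
        mean_sim = sum(sims) / len(sims)
        # Map the mean cosine from [-1, 1] to [0, 1] so it acts as a match factor.
        match = (mean_sim + 1.0) / 2.0
        scores[goal] = initial_weights[goal] * match
    total = sum(scores.values())
    if total > 0:
        scores = {g: s / total for g, s in scores.items()}
    best = max(scores, key=scores.get)
    return best, scores


# Hypothetical example: the user has been steering roughly along heading 0.
recent = [0.0, 0.1, 0.05]
paths = {"kitchen": [0.0, 0.1, 0.0], "door": [math.pi / 2] * 3}
weights = {"kitchen": 0.5, "door": 0.5}
best_goal, goal_scores = predict_goal(recent, paths, weights)
```

With equal initial weights the goal whose suggested headings match the user's steering wins. When two goals suggest identical headings, the match factors are equal and the initial weights alone decide the outcome.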

Screenshot 1 - This screenshot shows the “Dashboard” GUI interface, with path planning display.

The run-time loop runs as the user is directing the wheelchair around the environment. In this loop, location and orientation data are first acquired from the wheelchair and then used to produce a force vector, defined by a direction and a “risk” factor. The direction of the force vector is a translation of the gradient of the harmonic function at the user’s location. The amount of “risk” that the user’s action incurs is a heuristic that incorporates the velocity of the wheelchair, the potential value of the harmonic function at the user’s location, and the next potential value of the harmonic function in the direction that the user is heading. This vector is then translated into a force-feedback effect, which is played on the user’s joystick. Finally, the joystick’s position is used to drive the wheelchair’s motors, and the loop repeats. In this process the path prediction of the autonomous system influences the wheelchair’s behavior only indirectly, by providing guidance to the user. The actual drive commands are always provided by the user (although the user could opt to simply follow the force vector, and thus follow the harmonic function path).
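As an illustration of how a direction can be read off a discretized harmonic function, the sketch below relaxes Laplace's equation on a small grid and takes the negative gradient at a cell. It is a simplified example under stated assumptions, not the implementation described here: the map format (`#` for obstacles, `G` for the goal, `.` for free space), the boundary values (obstacles fixed at 1.0, goal fixed at 0.0), the enclosing border of obstacle cells, and the function names `solve_harmonic` and `guidance_direction` are all hypothetical. The “risk” magnitude is not covered.

```python
import math


def solve_harmonic(grid, iterations=2000):
    """Approximate a harmonic function on a grid by Gauss-Seidel relaxation.

    grid: list of equal-length strings; '#' is an obstacle (held at 1.0),
        'G' is the goal (held at 0.0) and '.' is free space. The map is
        assumed to be enclosed by a border of '#' cells.
    Returns a 2D list of potentials.
    """
    rows, cols = len(grid), len(grid[0])
    phi = [[0.0 if grid[r][c] == "G" else 1.0 for c in range(cols)]
           for r in range(rows)]
    free = [(r, c) for r in range(rows) for c in range(cols)
            if grid[r][c] == "."]
    for _ in range(iterations):
        for r, c in free:
            # Each free cell becomes the average of its four neighbors.
            phi[r][c] = 0.25 * (phi[r - 1][c] + phi[r + 1][c]
                                + phi[r][c - 1] + phi[r][c + 1])
    return phi


def guidance_direction(phi, r, c):
    """Unit vector (d_row, d_col) pointing down the gradient at cell (r, c)."""
    g_row = (phi[r + 1][c] - phi[r - 1][c]) / 2.0  # central differences
    g_col = (phi[r][c + 1] - phi[r][c - 1]) / 2.0
    norm = math.hypot(g_row, g_col)
    if norm == 0.0:
        return (0.0, 0.0)
    return (-g_row / norm, -g_col / norm)


# Hypothetical map: a short corridor with the goal at its right end.
CORRIDOR = [
    "#######",
    "#....G#",
    "#######",
]
potential = solve_harmonic(CORRIDOR)
direction = guidance_direction(potential, 1, 1)
```

In the corridor the potential falls steadily towards the goal, so the direction at the left end points along the corridor towards the goal. Because a harmonic function has no local minima in free space, following the negative gradient does not get trapped before reaching the goal, although with these boundary values the gradient can become very small far from the goal.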

Screenshot 2 - This screenshot shows the simulation environment from the point of view of the user as sitting on the simulated wheelchair.

The design methodology was implemented on a Dell Dimension 8250 with a 2.66 GHz Pentium 4 CPU running Windows XP, using Microsoft Visual Studio 2005 and the C# programming language (chosen because of its connection with DirectX and DirectInput). The force-feedback joystick used for the implementation was a Microsoft Sidewinder Force Feedback 2. This joystick was chosen because of its use in previous academic force-feedback research (1, 6). The entire system was built on top of Microsoft Robotics Studio (Photograph, Screenshot 1, Screenshot 2).

RESULTS

To better understand the ramifications of the choices made in the design and implementation of this thesis project, experiments were undertaken. The two most crucial portions of the project were tested: goal selection in the outer loop and the effectiveness of the force-feedback effects in the run-time loop. The goal selection system was evaluated by testing different scenarios that may occur, varying goal similarity, user actions, and the initial starting weight for the intended goal. The goal selection system was accurate in situations where it was reasonable to expect it to be so; the only issue arose in the experiments where the goals were very similar and user actions were not enough to differentiate between them. In these cases, the initial weight of the intended goal was the deciding factor in which goal was predicted by the system. Thus, with this goal prediction metric, goal clustering forces the system to rely more heavily on the external system of user preferences.

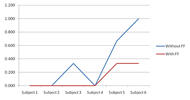

Graph 1 - This graph shows the number of collisions incurred for each subject, both with and without force feedback suggestions. In all cases, subjects incurred fewer collisions with force feedback.

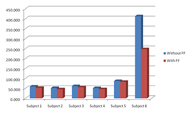

Six test subjects participated in the user testing portion of the experiments. All subjects were given time before the test runs to familiarize themselves with the system, both with force-feedback and without. Three test runs were given without force-feedback and three with, and the runs were alternated in an attempt to avoid a bias from the user becoming more familiar with the system as the experiments wore on. All subjects showed improvements in the length of time it took them to complete the test course, with an average improvement of 14.5% (Graph 2). All users also showed improvement in the number of collisions incurred while completing the course (Graph 1). All users indicated that the force-feedback suggestions were helpful to them in avoiding obstacles and approaching the goal, and all users showed excitement for the potential of the concept after testing the system.

CONCLUSION

Graph 2 - This graph shows the amount of time taken to complete the test course for each subject, both with and without force feedback suggestions. In all cases, subjects completed the course in less time with force feedback.

This thesis developed and implemented a novel concept for goal prediction and force-feedback in a semi-autonomous wheelchair, and evaluated its effectiveness on a simulated system. The ultimate motivation behind this thesis was to develop technologies that could help people who have disabilities that require them to use a wheelchair. The idea was to create a system that assists the user rather than replacing the user. We believed that force-feedback would be the most appropriate and efficient method of assisting the user in maneuvering in the environment because of its intuitive nature and its potential to avoid the distracting effects of other means of feedback, such as GUIs and auditory interfaces. In addition, this belief is supported by previous research, both in and outside the field of assistive technology, that indicated, unequivocally, that force-feedback is simple, intuitive, and efficient in assisting users with steering tasks. Following these considerations, a design was developed and implemented that addresses all of the objectives: the ability to infer a user’s goal based on what the system can know about the user’s intentions, the ability to guide the user towards that goal using a combination of force-feedback effects and harmonic function path planning, and the ability to assist the user in avoiding collisions with obstacles. The experiments performed with the system indicate a positive response from all users, whose ages and abilities ranged from young and healthy to elderly and with some hand coordination issues.

REFERENCES

1. Luo, R. C., Hu, C. Y., Chen, T. M., Lin, M. H. (1999). Force reflective feedback control for intelligent wheelchairs. Intelligent Robots and Systems, 1999. IROS’99 Proceedings. 1999 IEEE/RSJ International Conference on, 2. 918-923.

2. Deng, J. L. (1989). Introduction to Grey system theory. The Journal of Grey System, 1(1). 1-24.

3. Luo, R. C., Chen, T. M., Hu, C. Y., Hsaio, Z. H. (1999). Adaptive Intelligent Assistant Control of Electrical Wheelchair by Grey-Fuzzy Decision Making Algorithm. Proceedings of 1999 IEEE International Conference on Robotics and Automation, 3. 2014-2019.

4. Protho, J. L., LoPresti, E. F., Brienza, D. M. (2000). An Evaluation of An Obstacle Avoidance Force Feedback Joystick. Research Slide Lecture for Wheelchair University. University of Pittsburgh, PA.

5. Protho, J. L., LoPresti, E. F., Brienza, D. M. An Evaluation of an Obstacle Avoidance Force Feedback Joystick. Rehabilitation Engineering & Assistive Technology Society of North America. Pittsburgh, PA.

6. Fattouh, A., Sahnoun, M., Bourhis, G. (2004). Force feedback joystick control of a powered wheelchair: preliminary study. Systems, Man and Cybernetics, 2004 IEEE International Conference on, 3. 2640-2645.

7. Bourhis, G., Sahnoun, M. (2007). Assisted Control Mode for a Smart Wheelchair. Proceedings of the 2007 IEEE 10th International Conference on Rehabilitation Robotics. Noordwijk, The Netherlands.